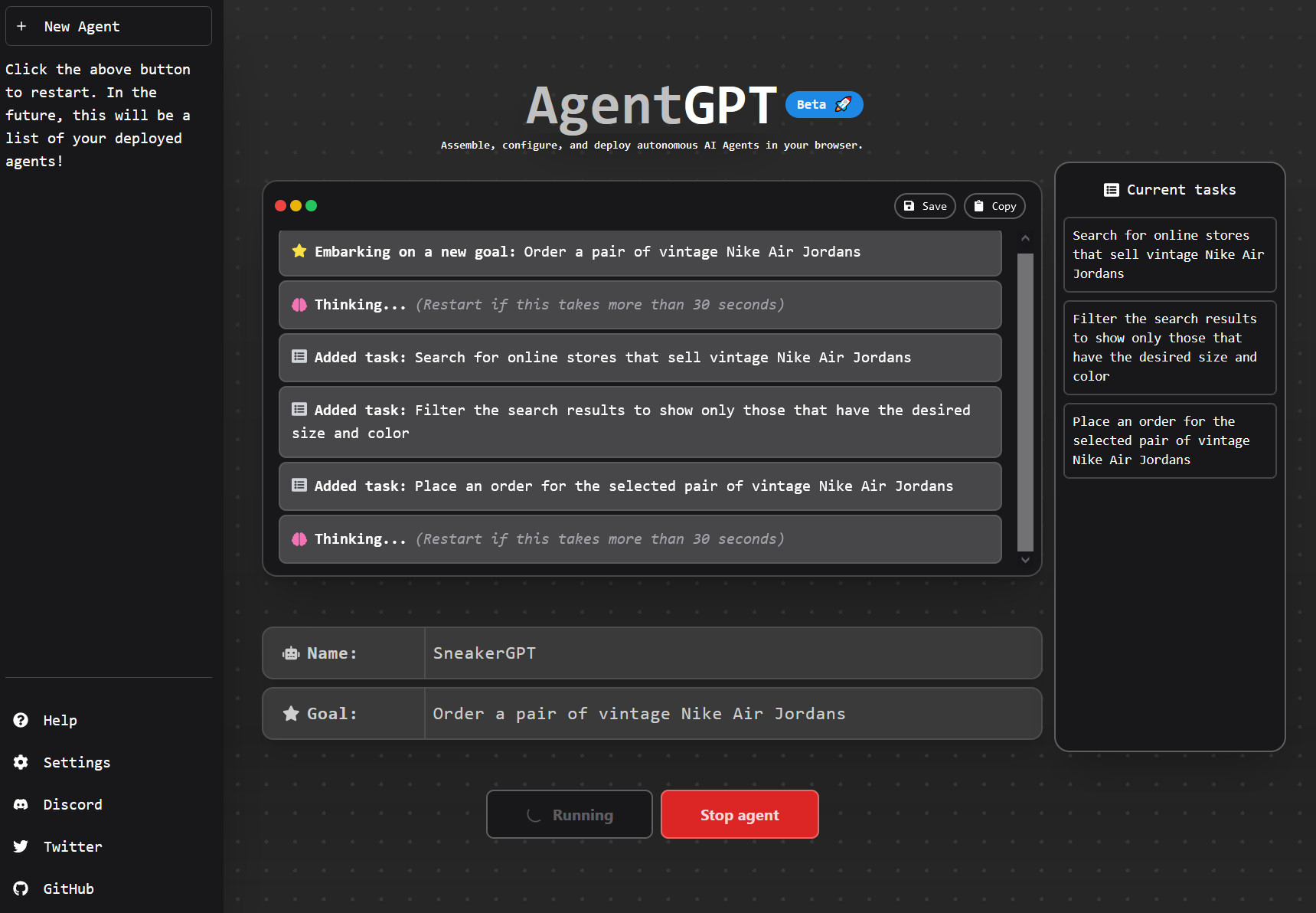

Since OpenAI launched its GPT-4 API to beta testers last month, a loose group of developers has been experimenting with agent-like (“agentic”) implementations of the AI model that attempt to carry out multistep tasks with as little human intervention as possible. These homebrew scripts can loop, iterate, and spin off new instances of an AI model as needed.

Two experimental open source projects, in particular, have captured much attention on social media, especially among those who hype AI projects relentlessly: Auto-GPT, created by Toran Bruce Richards, and BabyAGI, created by Yohei Nakajima.

What do they do? Well, right now, not very much. They need a lot of human input and hand-holding along the way, so they’re not yet as autonomous as promised. But they represent early steps toward more complex chains of AI models that could potentially be more capable than a single AI model working alone.

“Autonomously achieve whatever goal you set”

Richards bills his script as “an experimental open source application showcasing the capabilities of the GPT-4 language model.” The script “chains together LLM ‘thoughts’ to autonomously achieve whatever goal you set.”

Basically, Auto-GPT takes output from GPT-4 and feeds it back into itself with an improvised external memory so that it can further iterate on a task, correct mistakes, or suggest improvements. Ideally, such a script could serve as an AI assistant that could perform any digital task by itself.
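
To make that feedback loop concrete, here is a minimal sketch in Python. It is not Auto-GPT's actual code: the function names, the prompt wording, the `[memo]` marker, and the `fake_model` stand-in (used here instead of a real GPT-4 call) are all assumptions made for illustration.

```python
from typing import Callable, List


def run_feedback_loop(goal: str, model: Callable[[str], str], max_steps: int = 3) -> List[str]:
    """Feed each model output back in as context for the next step."""
    memory: List[str] = []  # improvised external memory: a plain list of past outputs
    for _ in range(max_steps):
        # Only the most recent notes are replayed, to keep the prompt short.
        context = "\n".join(memory[-5:])
        prompt = f"Goal: {goal}\nMemory so far:\n{context}\nNext step:"
        output = model(prompt)
        memory.append(output)
        if "DONE" in output:  # the model signals that it considers the task finished
            break
    return memory


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a language model; it only counts earlier memos."""
    memos_seen = prompt.count("[memo]")
    if memos_seen >= 2:
        return "[memo] DONE"
    return f"[memo] draft {memos_seen + 1}"


if __name__ == "__main__":
    for line in run_feedback_loop("write a summary", fake_model, max_steps=5):
        print(line)
```

The point of the sketch is only the shape of the loop: each output is stored, replayed into the next prompt, and the loop stops either when the model says it is done or when a step limit is reached.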

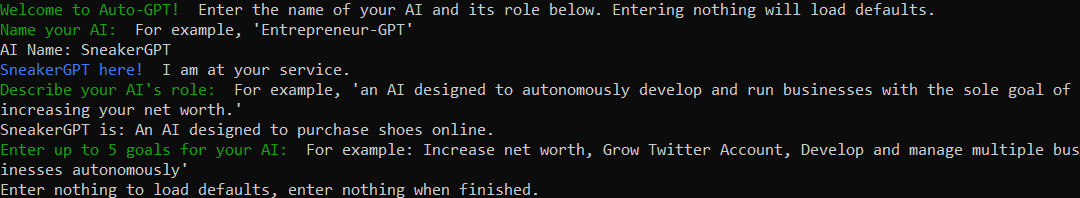

To test these claims, we ran Auto-GPT (a Python script) locally on a Windows machine. When you start it, it asks for a name for your AI agent, a description of its role, and a list of five goals it attempts to fulfill. While setting it up, you need to provide an OpenAI API key and a Google search API key. When running, Auto-GPT asks for permission to perform every step it generates by default, although it also includes a fully automatic mode if you’re feeling adventurous.
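
The step-by-step permission behavior described above can be pictured with a short sketch. Again, this is an illustration under stated assumptions rather than Auto-GPT's real implementation: `run_with_approval`, its `continuous` flag, and the choice to stop at the first refused step are all hypothetical.

```python
from typing import Callable, Iterable, List


def run_with_approval(
    steps: Iterable[str],
    approve: Callable[[str], bool],
    continuous: bool = False,
) -> List[str]:
    """Return the steps that were allowed to run.

    By default every step needs approval; continuous=True skips the check,
    mimicking a fully automatic mode.
    """
    executed: List[str] = []
    for step in steps:
        if not continuous and not approve(step):
            break  # assumption: a refusal halts the agent rather than skipping the step
        executed.append(step)  # a real agent would carry out the step here
    return executed


if __name__ == "__main__":
    plan = ["search the web", "write notes.txt", "delete old files"]
    # Hypothetical reviewer who refuses anything that deletes data.
    cautious = lambda step: "delete" not in step
    print(run_with_approval(plan, cautious))
    print(run_with_approval(plan, cautious, continuous=True))
```

In the default mode the reviewer's refusal stops the run before the third step, while the automatic mode executes all three without asking.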
